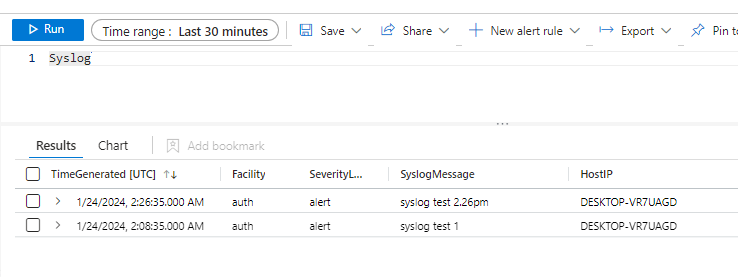

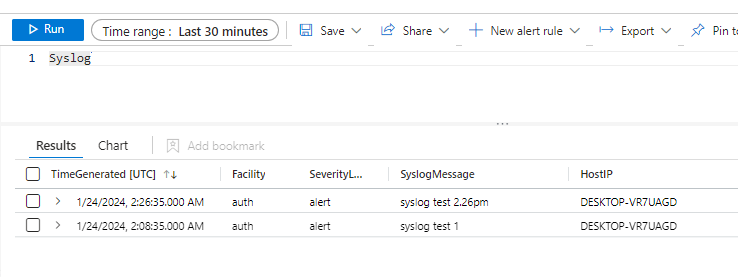

This example of using REST to populate Syslog is based on a Microsoft guide for using Logstash:

https://learn.microsoft.com/en-us/azure/sentinel/connect-logstash-data-connection-rules#create-a-sample-file
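DCR-based ingestion like this goes through the Logs Ingestion API. The Python sketch below shows the shape of that REST call, assuming you already hold an access token for the `https://monitor.azure.com` audience. Records are POSTed as a JSON array to the data collection endpoint (DCE), addressed by the data collection rule (DCR) immutable ID and stream name. The endpoint, DCR ID, stream name and function name are hypothetical placeholders, and the request is built but not sent.

```python
import json
import urllib.request


def build_logs_ingestion_request(dce_endpoint, dcr_immutable_id, stream_name,
                                 records, access_token,
                                 api_version="2023-01-01"):
    """Build (but do not send) a Logs Ingestion API upload request."""
    url = (f"{dce_endpoint.rstrip('/')}/dataCollectionRules/"
           f"{dcr_immutable_id}/streams/{stream_name}"
           f"?api-version={api_version}")
    # The body is a JSON array of records matching the DCR stream schema.
    body = json.dumps(records).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
    )


# Hypothetical values for illustration only.
req = build_logs_ingestion_request(
    "https://my-dce.eastus-1.ingest.monitor.azure.com",
    "dcr-00000000000000000000000000000000",
    "Custom-SyslogStream",
    [{"TimeGenerated": "2024-01-01T00:00:00Z", "SyslogMessage": "test"}],
    access_token="<token>",
)
print(req.full_url)
# urllib.request.urlopen(req) would send it.
```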

Fluent-bit natively supports forwarding data to Event Hubs, with Kafka support built into the Linux packages. On Windows, this module was left out of the standard package simply because it had not been tested against the Apache Kafka redistributable.
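Event Hubs exposes a Kafka-compatible endpoint on port 9093 of each namespace. Clients authenticate with SASL PLAIN, using the literal username `$ConnectionString` and the namespace connection string as the password. The Python sketch below renders a Fluent-bit `kafka` output section for that endpoint, passing the SASL settings through as `rdkafka.*` properties. The function name, namespace, event hub and connection string are hypothetical, and the keys should be checked against the Fluent-bit Kafka output documentation for your version.

```python
def fluentbit_eventhubs_output(namespace, event_hub, connection_string,
                               match="*"):
    """Render a Fluent-bit [OUTPUT] section for the kafka plugin that targets
    an Event Hubs namespace through its Kafka-compatible endpoint."""
    settings = [
        ("Name", "kafka"),
        ("Match", match),
        ("Brokers", f"{namespace}.servicebus.windows.net:9093"),
        # The event hub name plays the role of the Kafka topic.
        ("Topics", event_hub),
        # librdkafka properties are passed through with the rdkafka. prefix.
        ("rdkafka.security.protocol", "SASL_SSL"),
        ("rdkafka.sasl.mechanism", "PLAIN"),
        # Event Hubs expects this literal username for connection-string auth.
        ("rdkafka.sasl.username", "$ConnectionString"),
        ("rdkafka.sasl.password", connection_string),
    ]
    lines = ["[OUTPUT]"] + [f"    {key} {value}" for key, value in settings]
    return "\n".join(lines)


print(fluentbit_eventhubs_output("my-namespace", "syslog", "<connection-string>"))
```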

Using Fluent-bit on Windows with ADX provides a cost-effective way of harvesting large amounts of security data from monitored systems.

Official installers for the latest Fluent-bit package are available for download here:

Log Analytics Query Packs allow for commonly used queries to be saved and made accessible within Sentinel.

When a staff member with Contributor permissions saves their first query from within Log Analytics to the default query pack, the default resource group and the default query pack are created for the subscription.
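Queries can also be saved to a query pack through the Azure Resource Manager REST API rather than the portal. The Python sketch below builds the PUT URL and JSON body for one query. It assumes the default names `LogAnalyticsDefaultResources` and `DefaultQueryPack` and `api-version=2019-09-01`; all three are assumptions to verify against the Query Packs REST reference. Each query is addressed by a new GUID, and the function name is hypothetical.

```python
import json
import uuid


def build_query_pack_put(subscription_id, display_name, kql,
                         resource_group="LogAnalyticsDefaultResources",
                         query_pack="DefaultQueryPack",
                         api_version="2019-09-01"):
    """Return (url, json_body) for an HTTP PUT that saves one query to a query pack."""
    query_id = str(uuid.uuid4())  # each saved query is addressed by a GUID
    url = (
        "https://management.azure.com"
        f"/subscriptions/{subscription_id}"
        f"/resourceGroups/{resource_group}"
        "/providers/Microsoft.OperationalInsights"
        f"/queryPacks/{query_pack}/queries/{query_id}"
        f"?api-version={api_version}"
    )
    # displayName and body (the KQL text) are the core query properties.
    body = {"properties": {"displayName": display_name, "body": kql}}
    return url, json.dumps(body)


url, body = build_query_pack_put(
    "00000000-0000-0000-0000-000000000000",
    "Failed sign-ins",
    'SigninLogs | where ResultType != "0"',
)
print(url)
print(body)
```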

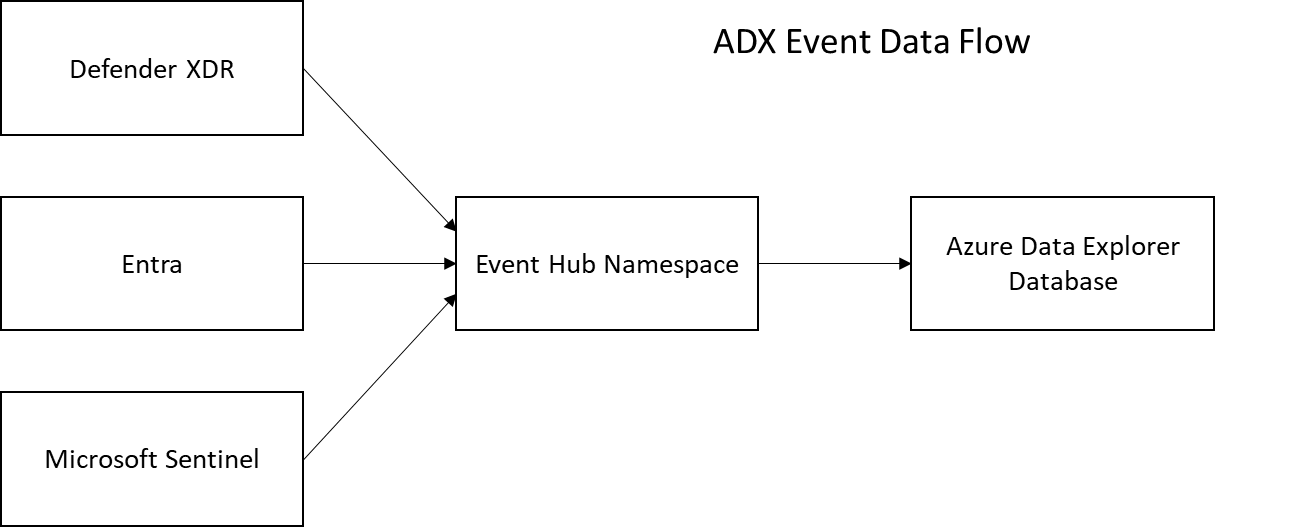

With Azure Data Explorer, we have to manually define the schema for each table created within the service.
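Because ADX won't infer the schema, each table is declared up front with a `.create table` control command. The small Python helper below builds that command from (name, type) pairs and rejects unknown Kusto scalar types. The helper name is hypothetical, and the Syslog-style column list is an illustrative subset, not a full mirror of the Log Analytics `Syslog` table.

```python
KUSTO_TYPES = {"bool", "datetime", "decimal", "dynamic", "guid",
               "int", "long", "real", "string", "timespan"}


def create_table_command(table, columns):
    """Build an ADX `.create table` control command from (name, type) pairs."""
    for name, kusto_type in columns:
        if kusto_type not in KUSTO_TYPES:
            raise ValueError(f"unsupported Kusto type for {name}: {kusto_type}")
    cols = ", ".join(f"{name}:{kusto_type}" for name, kusto_type in columns)
    return f".create table {table} ({cols})"


syslog_schema = [
    ("TimeGenerated", "datetime"),
    ("Computer", "string"),
    ("Facility", "string"),
    ("SeverityLevel", "string"),
    ("SyslogMessage", "string"),
]
print(create_table_command("Syslog", syslog_schema))
# .create table Syslog (TimeGenerated:datetime, Computer:string, Facility:string, SeverityLevel:string, SyslogMessage:string)
```

The resulting command can be run from the ADX web UI or any Kusto client.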

The standard method for organising enterprise-scale data ingestion is to use Event Hubs (Kafka) to regulate traffic volume and to provide a queue that ensures data isn't lost if issues impact the ADX cluster.
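The value of the queue is that producers and the ADX cluster are decoupled: events are retained until they have been delivered. The toy in-memory model below illustrates that idea in Python. It is not the Event Hubs or Kafka client API, and real Event Hubs retention is durable and time-based rather than an in-process deque. `BufferedForwarder` and `adx_sink` are hypothetical names.

```python
from collections import deque


class BufferedForwarder:
    """Toy in-memory model of a queue between log producers and an ADX-like sink."""

    def __init__(self, sink, max_events=10_000):
        self.sink = sink            # callable that delivers one event downstream
        self.queue = deque()
        self.max_events = max_events

    def enqueue(self, event):
        # A full buffer pushes back on producers instead of silently dropping data.
        if len(self.queue) >= self.max_events:
            raise OverflowError("buffer full: producer must back off")
        self.queue.append(event)

    def drain(self):
        """Deliver queued events in order; stop at the first failure and keep the rest."""
        delivered = 0
        while self.queue:
            try:
                self.sink(self.queue[0])
            except ConnectionError:
                break               # sink unavailable: retain events for a later retry
            self.queue.popleft()    # remove only after a successful delivery
            delivered += 1
        return delivered


received = []
cluster_up = False


def adx_sink(event):
    if not cluster_up:
        raise ConnectionError("cluster unavailable")
    received.append(event)


fwd = BufferedForwarder(adx_sink)
for seq in range(3):
    fwd.enqueue({"seq": seq})
print(fwd.drain(), len(fwd.queue))   # 0 3  (outage: nothing lost)
cluster_up = True
print(fwd.drain(), len(fwd.queue))   # 3 0  (backlog delivered after recovery)
```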

Security Operations teams need access to large volumes of historical syslog data to trace incursions after an event has occurred. Azure Data Explorer (ADX) is ideal as a low-cost, Sentinel-integrated system for archiving this data.

The following is an rsyslog template for parsing Common Event Format (CEF) messages and writing the output to a dedicated log file. The resulting log file can then be transformed by the Azure Monitor Agent (AMA), in JSON format, for ingestion into Log Analytics.
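As an illustration of the parsing step such a template performs, the Python sketch below splits a CEF message into its seven pipe-delimited header fields and its `key=value` extension, then emits JSON. It follows the CEF escaping rules (`\|` in the header, `\=` and `\\` in extension values), but it is not the rsyslog template itself. It assumes extension values never contain an unescaped `=`, and the field names in `HEADER_FIELDS` are illustrative choices.

```python
import json
import re

HEADER_FIELDS = ["Version", "DeviceVendor", "DeviceProduct", "DeviceVersion",
                 "DeviceEventClassID", "Name", "Severity"]
# An extension key is preceded by start-of-string or a space and followed by '='.
EXT_KEY = re.compile(r"(?:^| )([\w.\[\]-]+)=")


def _unescape(value):
    # Undo CEF extension escapes: \= -> =, \\ -> \, \n -> newline, \r -> CR
    return re.sub(r"\\(.)",
                  lambda m: {"n": "\n", "r": "\r"}.get(m.group(1), m.group(1)),
                  value)


def parse_cef(line):
    """Parse one CEF message (optionally after a syslog header) into a dict."""
    start = line.find("CEF:")
    if start < 0:
        raise ValueError("not a CEF message")
    text = line[start + len("CEF:"):]

    # Header: seven '|'-terminated fields; a backslash escapes the next character.
    fields, current, i = [], [], 0
    while i < len(text) and len(fields) < len(HEADER_FIELDS):
        ch = text[i]
        if ch == "\\" and i + 1 < len(text):
            current.append(text[i + 1])
            i += 2
            continue
        if ch == "|":
            fields.append("".join(current))
            current = []
        else:
            current.append(ch)
        i += 1
    if len(fields) < len(HEADER_FIELDS):
        raise ValueError("truncated CEF header")
    record = dict(zip(HEADER_FIELDS, fields))

    # Extension: space-separated key=value pairs; values may contain spaces.
    extension = text[i:]
    matches = list(EXT_KEY.finditer(extension))
    for n, m in enumerate(matches):
        end = matches[n + 1].start() if n + 1 < len(matches) else len(extension)
        record[m.group(1)] = _unescape(extension[m.end():end].strip())
    return record


sample = ("Jan 18 11:07:53 host CEF:0|Security|threatmanager|1.0|100|"
          "worm successfully stopped|10|src=10.0.0.1 dst=2.1.2.2 spt=1232 "
          "msg=Detected a\\=b threat")
print(json.dumps(parse_cef(sample), indent=2))
```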

For Microsoft Sentinel, a 'syslog forwarder' acts as a centralisation point for Linux systems, and the Azure Monitor Agent (AMA) on that forwarder sends the messages it receives to a designated Log Analytics workspace. AMA provides the ability to filter logs using KQL queries at source, potentially reducing the cost of ingesting a large amount of noise.
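AMA's filtering is configured in the data collection rule (facility and log-level selection, plus an optional KQL transformation), so the following is not that mechanism. It is a Python sketch of the arithmetic behind the simplest kind of noise reduction. Every syslog message starts with `<PRI>`, where PRI = facility * 8 + severity, so low-importance severities (info, debug) can be identified and dropped before ingestion. The function names and the `notice` cut-off are assumptions for illustration.

```python
import re

PRI = re.compile(r"^<(\d{1,3})>")


def decode_pri(message):
    """Return (facility, severity) from the leading <PRI> of a syslog message."""
    m = PRI.match(message)
    if not m:
        raise ValueError("missing <PRI> header")
    pri = int(m.group(1))
    return pri // 8, pri % 8   # PRI = facility * 8 + severity


def keep(message, max_severity=5):
    """Keep messages at or above 'notice' (5); lower numbers are more severe."""
    _, severity = decode_pri(message)
    return severity <= max_severity


messages = [
    "<34>Oct 11 22:14:15 host su: 'su root' failed",   # auth (4), crit (2)
    "<167>Oct 11 22:14:16 host app: debug detail",     # local4 (20), debug (7)
]
print([m for m in messages if keep(m)])   # only the <34> auth message survives
```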

AMA does have a catch that's in the fine print of its billing:

Microsoft's strategy for integrating with security entities and incidents is through Playbooks (Logic Apps). Engineers who have been involved in complex automation will often prefer scripting to workflows, yet Playbooks are the only form of automation available within the Sentinel console.
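For engineers who prefer scripting, the incidents that Playbooks act on are also reachable through the Microsoft.SecurityInsights Azure Resource Manager API. The Python sketch below builds (but does not send) a request that lists incidents in a workspace. The function name is hypothetical, the `api-version` passed in the usage line is an assumption to check against the current REST reference, and the token must be for the `https://management.azure.com` audience.

```python
import urllib.request


def list_incidents_request(subscription_id, resource_group, workspace,
                           access_token, api_version, top=50):
    """Build (not send) an ARM GET request for Microsoft Sentinel incidents."""
    path = (
        f"/subscriptions/{subscription_id}/resourceGroups/{resource_group}"
        f"/providers/Microsoft.OperationalInsights/workspaces/{workspace}"
        "/providers/Microsoft.SecurityInsights/incidents"
    )
    # $top limits the page size; further pages come back via nextLink.
    url = f"https://management.azure.com{path}?api-version={api_version}&$top={top}"
    return urllib.request.Request(
        url,
        headers={"Authorization": f"Bearer {access_token}"},
        method="GET",
    )


# Placeholder identifiers; api-version "2023-02-01" is an assumption.
req = list_incidents_request("00000000-0000-0000-0000-000000000000",
                             "rg-sentinel", "law-sentinel", "<token>",
                             api_version="2023-02-01")
print(req.full_url)
```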

There can be a number of reasons for wanting to back up Azure (or Office 365) to GitHub. As an increasing number of SaaS services (like Microsoft Sentinel) are designed to be configured, and to deploy Azure services, through the console, traditional CI/CD code promotion doesn't work.

For some years I've been backing up my Azure subscription to GitHub using automated workflows. This lets me compare changes in my subscription over time, and because the backups are in Markdown I can look through them to reference previous versions of KQL queries.
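As a sketch of the Markdown step only, the Python below writes each exported KQL query to its own `.md` file inside a `kusto` code fence, so that Git diffs show query changes line by line. It assumes the queries have already been exported as dicts with hypothetical `name`, `query` and optional `description` keys; the function names are also hypothetical.

```python
import re
from pathlib import Path

FENCE = "`" * 3  # Markdown code fence marker


def query_to_markdown(name, kql, description=""):
    """Render one saved KQL query as a Markdown document suitable for Git."""
    parts = [f"# {name}", ""]
    if description:
        parts += [description, ""]
    parts += [f"{FENCE}kusto", kql.strip(), FENCE, ""]
    return "\n".join(parts)


def safe_filename(name):
    """Turn a query display name into a stable, diff-friendly file name."""
    return re.sub(r"[^A-Za-z0-9._-]+", "-", name).strip("-").lower() + ".md"


def write_backup(queries, out_dir):
    """Write each query dict to <out_dir>/<safe name>.md and return the paths."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    paths = []
    for q in queries:
        path = out / safe_filename(q["name"])
        path.write_text(
            query_to_markdown(q["name"], q["query"], q.get("description", "")),
            encoding="utf-8",
        )
        paths.append(path)
    return paths


print(query_to_markdown("Failed logons (24h)", "SigninLogs | take 10"))
print(safe_filename("Failed logons (24h)"))
```

Writing one file per query, with a stable name, keeps each Git commit focused on the queries that actually changed.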

At times it's necessary to get tokens for different Microsoft cloud platforms without access to developer kits or .dll modules. This function shows how different tokens can be obtained using passwords, interactive OIDC login and so on.

It's one of my best examples of how agile code development needs refactoring after years of ongoing updates. It will produce a hashtable that I can use in REST calls to the various Microsoft portals like Azure or Office 365.
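The function described above is PowerShell and supports several sign-in flows. As a hedged illustration of the same idea in Python, the sketch below covers only the OAuth 2.0 client-credentials flow against the Microsoft identity platform v2.0 token endpoint, with one `.default` scope per platform, and turns the token response into a header dictionary (the Python counterpart of the hashtable) for REST calls. `SCOPES`, `client_credentials_request` and `rest_headers` are hypothetical names; the tenant, client ID and secret are placeholders.

```python
import urllib.parse
import urllib.request

# Resource scopes for a few Microsoft cloud APIs; ".default" requests the
# permissions already granted to the app registration.
SCOPES = {
    "azure": "https://management.azure.com/.default",
    "graph": "https://graph.microsoft.com/.default",
    "loganalytics": "https://api.loganalytics.io/.default",
    "office365": "https://manage.office.com/.default",
}


def client_credentials_request(tenant_id, client_id, client_secret, audience):
    """Build (not send) a client-credentials token request for one platform."""
    url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"
    form = urllib.parse.urlencode({
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
        "scope": SCOPES[audience],
    }).encode("ascii")
    return urllib.request.Request(
        url, data=form, method="POST",
        headers={"Content-Type": "application/x-www-form-urlencoded"},
    )


def rest_headers(token_response):
    """Turn a parsed token response into headers for later REST calls."""
    return {
        "Authorization": f"{token_response['token_type']} {token_response['access_token']}",
        "Content-Type": "application/json",
    }


req = client_credentials_request("contoso.onmicrosoft.com", "<app-id>",
                                 "<secret>", "azure")
# with urllib.request.urlopen(req) as resp: token = json.load(resp)
headers = rest_headers({"token_type": "Bearer", "access_token": "<access-token>"})
print(req.full_url)
print(headers)
```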